Adaptive Models 7328769733 Designs

Adaptive Models 7328769733 Designs build continuous learning into the model lifecycle so that a system can adjust as data, objectives, and contexts shift. They rely on data-drift monitoring, modular architectures, and rigorous benchmarking to sustain performance and safety, and on governance, anomaly detection, and real-time oversight to balance exploration with accountability. The approach is presented as resilient across industries, but open questions remain about whether its governance is sufficient, how well it scales, and what its long-term societal impact will be as adaptation accelerates. The sections below look at where these tradeoffs show up most clearly.

What Adaptive Models Are and Why They Matter

Adaptive models are frameworks designed to adjust their structure or parameters in response to changing data, objectives, or contexts. They do this through explicit learning loops that monitor data drift, recalibrate the model when drift is detected, and enforce safety constraints.

This perspective clarifies why adaptive models matter: they sustain performance as conditions change, anticipate shifts rather than only reacting to them, and balance autonomy with oversight, supporting resilient decision processes that preserve freedom of action.
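The learning loop described above (monitor drift, recalibrate, enforce safety constraints) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the method behind any particular system: the Population Stability Index (PSI) is swapped in as one common drift score, the 0.25 threshold is a widely quoted rule of thumb rather than a value from this article, and `adaptation_step`, `recalibrate`, and `passes_safety_check` are hypothetical names introduced for the example.

```python
import math
from typing import Callable, Sequence, Tuple


def psi(reference: Sequence[float], current: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    ref_p, cur_p = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


def adaptation_step(
    reference: Sequence[float],
    current: Sequence[float],
    recalibrate: Callable[[Sequence[float]], object],   # hypothetical retraining hook
    passes_safety_check: Callable[[object], bool],      # hypothetical safety gate
    drift_threshold: float = 0.25,                      # assumed rule-of-thumb value
) -> Tuple[str, float]:
    """One pass of the loop: measure drift, recalibrate if needed, gate on safety."""
    score = psi(reference, current)
    if score < drift_threshold:
        return "keep", score          # no meaningful drift: leave the model alone
    candidate = recalibrate(current)  # drift detected: build an updated model
    if passes_safety_check(candidate):
        return "deploy", score        # the update satisfies the safety constraints
    return "escalate", score          # the update fails the gate: hand to a human
```

The three return values mirror the balance between autonomy and oversight: the loop acts on its own only when the safety check passes, and otherwise defers to a reviewer.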

Core Design Principles for Robust, Data-Driven Adaptability

Designing robust, data-driven adaptability hinges on a set of core principles that ensure models remain effective amid evolving inputs and objectives.

Governance comes first: it should adapt as the model changes, and it should be transparent enough to allow ongoing auditing, accountability, and alignment with stated values.

Rigorous benchmarking, modular architectures, and continuous-learning safeguards support resilience. Attention to dissenting signals, together with anomaly detection, preserves integrity without constraining creative exploration.
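As one illustration of anomaly detection that flags rather than blocks, the sketch below marks points in a metric stream that deviate sharply from the preceding window. It assumes a simple rolling z-score, which the article does not specify; the function name `flag_anomalies`, the window size, and the threshold are hypothetical choices for the example.

```python
from statistics import mean, stdev
from typing import List, Sequence


def flag_anomalies(
    values: Sequence[float],
    window: int = 20,          # assumed history length
    z_threshold: float = 3.0,  # assumed cutoff; tune per metric
) -> List[int]:
    """Return indices whose value deviates strongly from the preceding window."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # A perfectly flat history: any change at all is worth a look.
            if values[i] != mu:
                flagged.append(i)
            continue
        if abs(values[i] - mu) / sigma > z_threshold:
            flagged.append(i)
    return flagged


# Usage: a stable accuracy series with one sudden drop at index 30.
accuracy = [0.90, 0.91] * 15 + [0.40] + [0.90, 0.91] * 5
suspicious = flag_anomalies(accuracy)
```

The function only reports indices. What happens next (review, rollback, or nothing) is left to the governance process, so detection does not by itself restrict exploration.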

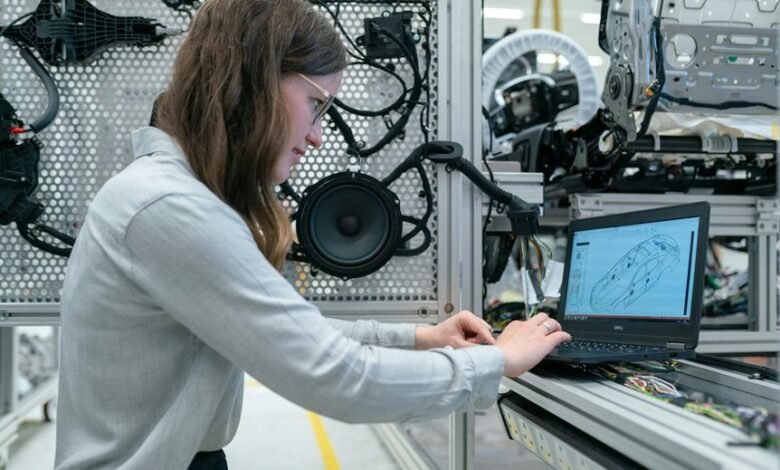

Real-World Deployment: Use Cases Across Industries

Real-world deployments show how adaptive models translate data-driven insights into operational value while balancing performance with governance and resilience. Across sectors, implementations prioritize real-time governance and bias mitigation, which keeps them transparent and accountable without sacrificing agility.

Analytical evaluation identifies domain-specific constraints, interoperability challenges, and measurable impact, guiding iterative refinement. The result is resilient systems that adapt responsibly without compromising safety or autonomy.

Building, Evaluating, and Scaling Adaptive Models Responsibly

Building adaptive models responsibly starts with model governance and ongoing monitoring for data drift, both of which support transparency and accountability.

Rigorous evaluation, iterative validation, and scalable deployment enable principled adaptation, balancing innovation with safety, freedom, and societal impact.
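One way to make iterative validation concrete is a promotion gate: a recalibrated model replaces the current one only if no tracked metric regresses beyond an agreed tolerance. The sketch below is an assumption-laden example, not a prescribed procedure. `GateResult`, `evaluate_gate`, the metric names, and the tolerances are all hypothetical, and every metric is assumed to be higher-is-better.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class GateResult:
    promote: bool
    reasons: Tuple[str, ...]  # empty when the candidate passes


def evaluate_gate(
    baseline: Dict[str, float],
    candidate: Dict[str, float],
    max_regression: Dict[str, float],  # allowed drop per metric (assumed values)
) -> GateResult:
    """Promote only if no gated metric drops by more than its tolerance."""
    reasons = []
    for name, allowed in max_regression.items():
        if name not in baseline or name not in candidate:
            # A metric that was not measured cannot be signed off.
            reasons.append(f"{name}: missing measurement")
            continue
        drop = baseline[name] - candidate[name]
        if drop > allowed:
            reasons.append(f"{name}: dropped {drop:.3f}, allowed {allowed:.3f}")
    return GateResult(promote=not reasons, reasons=tuple(reasons))


# Usage: accuracy improves, but the fairness score falls too far.
result = evaluate_gate(
    baseline={"accuracy": 0.90, "fairness": 0.95},
    candidate={"accuracy": 0.92, "fairness": 0.90},
    max_regression={"accuracy": 0.01, "fairness": 0.02},
)
```

Recording the reasons alongside the decision gives auditors a trail for each rejected update, which ties the gate back to the transparency goals discussed earlier.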

Conclusion

Adaptive models that continuously adjust structures and parameters demonstrate resilience amid shifting data and objectives. The integrated loops for monitoring drift, recalibration, and governance underpin trustworthy adaptability across domains. Scalability hinges on modular design, rigorous benchmarking, and transparent oversight. While exploration motivates discovery, accountability anchors safety. In the end, one adage holds: measure twice, cut once. A disciplined balance between innovation and governance yields robust, ethical, and impactful deployments.